For my last post about PrivateGPT, I needed to install Ollama on my machine.

The Ollama page itself is very simple, and so are the instructions for installing on Linux (WSL):

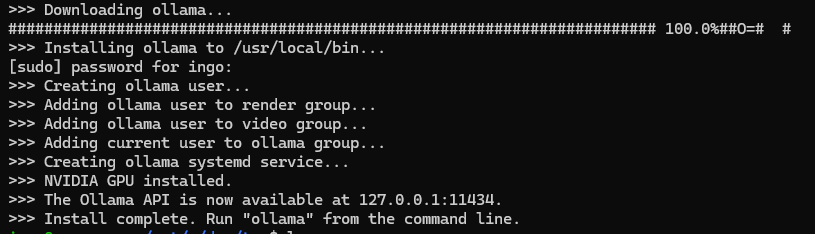

curl -fsSL https://ollama.com/install.sh | sh

ollama serve

Couldn't find '/home/ingo/.ollama/id_ed25519'. Generating new private key.
Your new public key is:
ssh-ed25519 AAAAC3NzaC1lZDI1NTE5AAAAIGgHcpiQqs4qOUu1f2tyjs9hfiseDnPfujpFj9nV3RVt

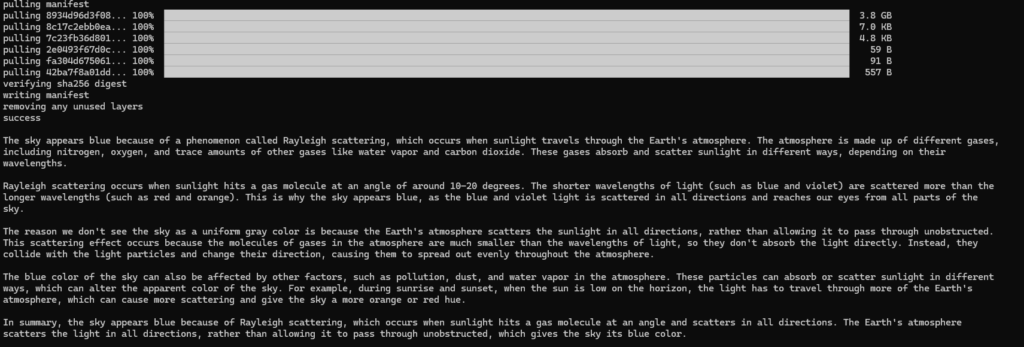

ollama run llama2 "why is the sky blue"

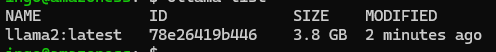

ollama list

curl 127.0.0.1:11434

Ollama is running
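If `ollama serve` is started in the background, the port may not answer immediately. Here is a minimal sketch of a wait loop around the same `curl` check. The function name `wait_for_ollama` and its two arguments are my own invention, and it assumes the server answers with exactly the text "Ollama is running" as seen above.

```shell
# Poll the Ollama root URL until it answers with the expected banner.
# $1: address to probe (default 127.0.0.1:11434), $2: number of attempts (default 10).
# Hypothetical helper, not part of the Ollama CLI.
wait_for_ollama() {
  url="${1:-127.0.0.1:11434}"
  tries="${2:-10}"
  i=0
  while [ "$i" -lt "$tries" ]; do
    # -f: fail on HTTP errors, -sS: quiet but still report real errors (discarded here)
    if [ "$(curl -fsS "$url" 2>/dev/null)" = "Ollama is running" ]; then
      return 0
    fi
    i=$((i + 1))
    sleep 1
  done
  return 1
}

# Usage: wait_for_ollama 127.0.0.1:11434 30 && ollama list
```

The function returns 0 as soon as the banner appears and 1 if all attempts fail, so it can be chained with `&&` in a setup script.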

OK, now pull the models we need for the PrivateGPT installation:

ollama pull mistral

ollama pull nomic-embed-text
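To double-check that both pulls succeeded, the output of `ollama list` can be scanned for the model names. This is only a sketch: `missing_models` is a hypothetical helper, and it assumes the listing has a header row followed by rows whose first column looks like `name:tag`.

```shell
# Print each required model that does not appear in an `ollama list` listing.
# The listing is read from stdin; required model names are the arguments.
# Assumption: first row is a header, first column of each row is "name:tag".
missing_models() {
  listing="$(cat)"
  for model in "$@"; do
    if ! printf '%s\n' "$listing" | awk -v m="$model" '
        NR > 1 { split($1, a, ":"); if (a[1] == m) found = 1 }
        END { exit !found }'; then
      printf '%s\n' "$model"
    fi
  done
}

# Usage: ollama list | missing_models mistral nomic-embed-text
```

An empty result means every required model is present; otherwise each missing name is printed on its own line.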

More information is available in Ollama's Model Library.

IP Problem

Ollama is bound to localhost:11434.

So Ollama is only reachable from localhost or 127.0.0.1, not from other IPs, such as from inside a Docker container.

There is already a feature request for this issue.

In the meantime, we have to use a workaround:

export OLLAMA_HOST=0.0.0.0:11434

ollama serve
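Instead of exporting the variable for the whole shell session, it can also be set for a single command by writing the assignment in front of it. The sketch below uses `env` as a stand-in for `ollama serve`, purely so the effect is visible without starting a server.

```shell
# A VAR=value prefix sets the variable only in the environment of that one command.
# `env` is a stand-in for `ollama serve` here; it just prints its environment.
scoped="$(OLLAMA_HOST=0.0.0.0:11434 env | grep '^OLLAMA_HOST=')"
echo "$scoped"

# For the real server, the equivalent one-liner would be:
#   OLLAMA_HOST=0.0.0.0:11434 ollama serve
```

With this form, other commands started later from the same shell do not inherit the changed `OLLAMA_HOST`.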

Test with local IP:

export DOCKER_GATEWAY_HOST="$(/sbin/ip route | awk '/dev eth0 proto kernel/{print $9}' | xargs)"
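The awk expression picks the ninth field of the `eth0` route line, which is the address after `src` when the line has the usual layout. A slightly more defensive sketch looks for the `src` keyword instead of a fixed column. The function name `route_src_ip` is hypothetical, and the layout of the `ip route` lines is an assumption based on typical output.

```shell
# Print the source address from the first route line of the given interface.
# Reads `ip route` output from stdin; the interface defaults to eth0.
# Hypothetical helper: it searches for the "src" keyword rather than field 9.
route_src_ip() {
  awk -v iface="${1:-eth0}" '
    {
      on_iface = 0
      for (i = 1; i < NF; i++) {
        if ($i == "dev" && $(i + 1) == iface) on_iface = 1
      }
      if (!on_iface) next
      for (i = 1; i < NF; i++) {
        if ($i == "src") { print $(i + 1); exit }
      }
    }
  '
}

# Usage: export DOCKER_GATEWAY_HOST="$(/sbin/ip route | route_src_ip eth0)"
```

Lines for the interface that carry no `src` entry (for example a default route) are skipped, so no trimming with `xargs` is needed.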

curl $DOCKER_GATEWAY_HOST:11434

Ollama is running
