A "human-readable" domain name should make the previously created EC2 instance easier to reach.

I want to stay within the AWS ecosystem, so I'll register the domain through Amazon Route 53. Otherwise I might have picked a different registrar, as I already did for an inexpensive website.

Choosing the domain name

The project will probably not stay around for very long, at least not on my infrastructure. So I don't need extensive market research for a great name; it just has to be memorable.

The most important requirement is a low price.

The price list on the AWS site is not particularly clear, and googling "route 53 cheapest domain" only turned up a link to a PDF. Among other things, it lists the "Registration and Renewal Price", and at 3 dollars the TLD "click" is the cheapest.

However, "click" is not the best choice in German-speaking countries, where the word is spelled "Klick": "Hey, check out my site meineApp.click." "I can't find meineApp.klick."

The second-cheapest TLD, at 5 dollars, is "link". I prefer "link" to "click", and the price difference is acceptable.

After a short brainstorming session I settled on the name "freigabe" (German for "release" or "share") with the TLD "link", i.e. http://freigabe.link.

Registering the domain name

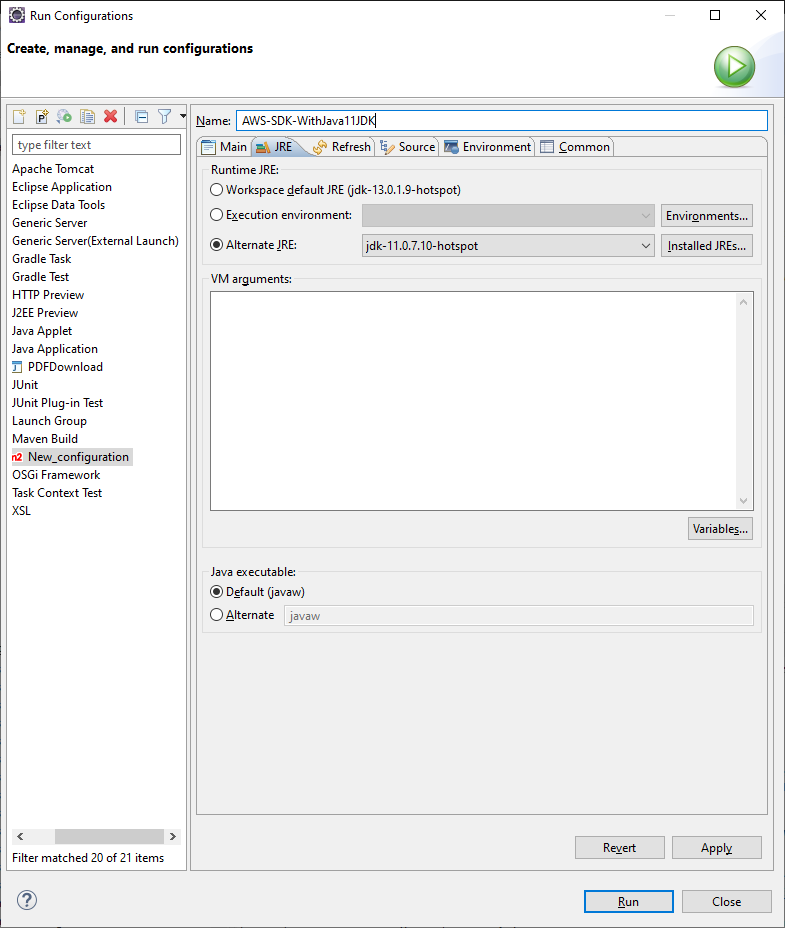

Go to the Route 53 service page, open "Domain registration" and enter the desired domain:

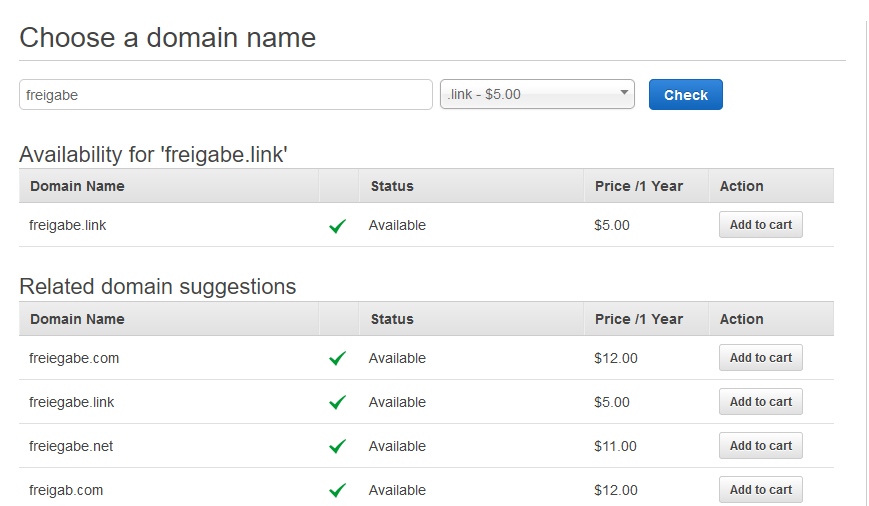

Into the shopping cart and ... the next step asks me to enter my details? Hey Amazon, you already have them!

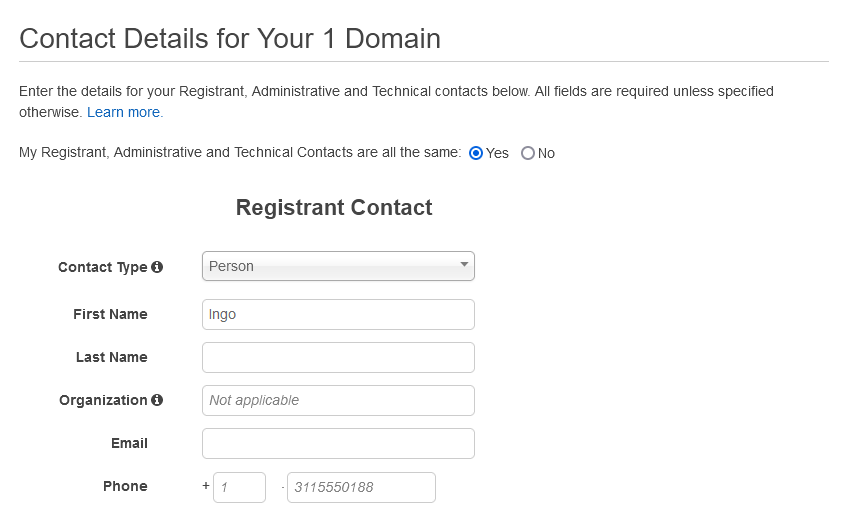

The domain is then registered in my name, which unfortunately can take up to three days.

I'll pause here until the registration is complete; after that, I'll continue with connecting the domain to EC2.

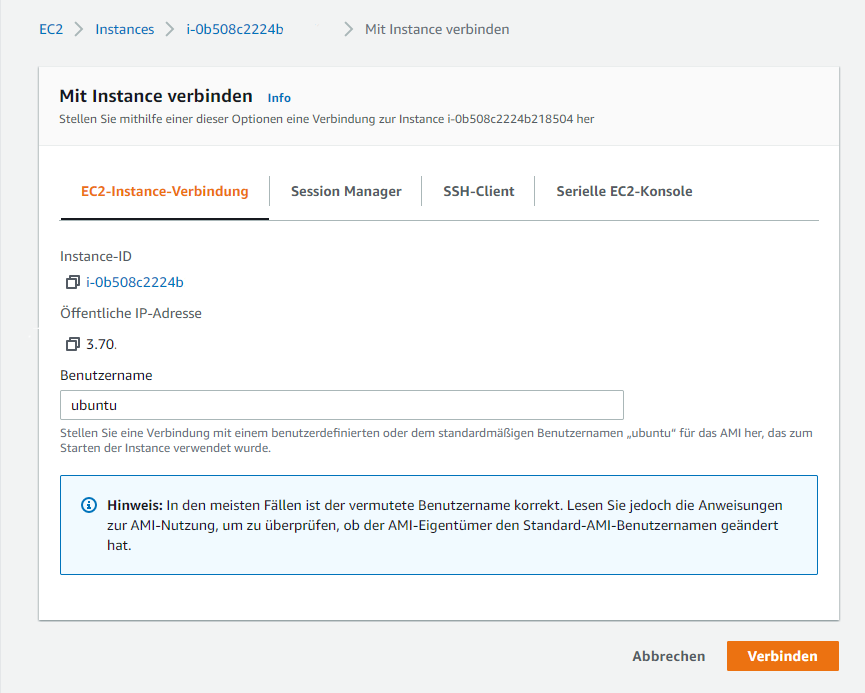

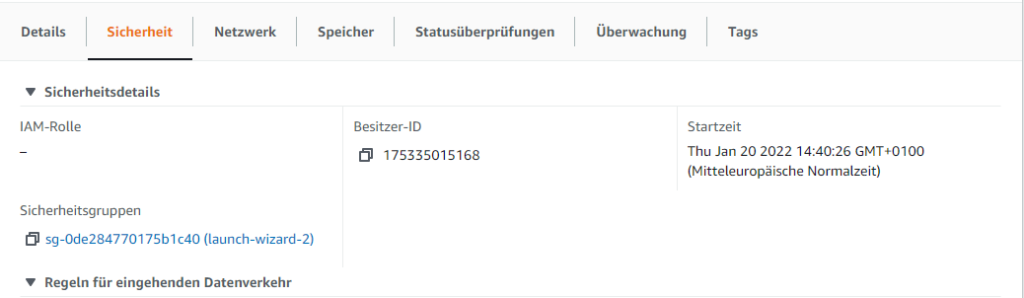

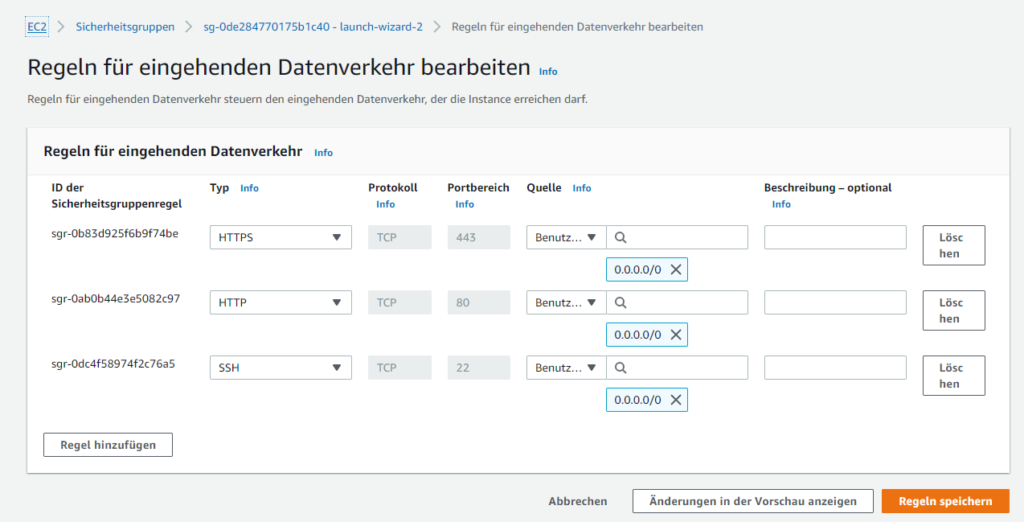

Connecting the domain name to EC2

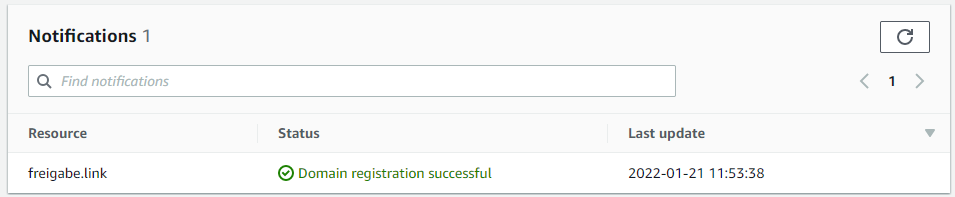

Fortunately, the domain registration was complete after just three hours rather than three days. On the downside, the 5 dollars for the domain name are a net price, so 19% VAT is added on top.
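For reference, the gross price works out as follows, assuming the 19% VAT is charged on the full 5-dollar net price:

```latex
5\,\text{USD} \times 1.19 = 5.95\,\text{USD}
```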

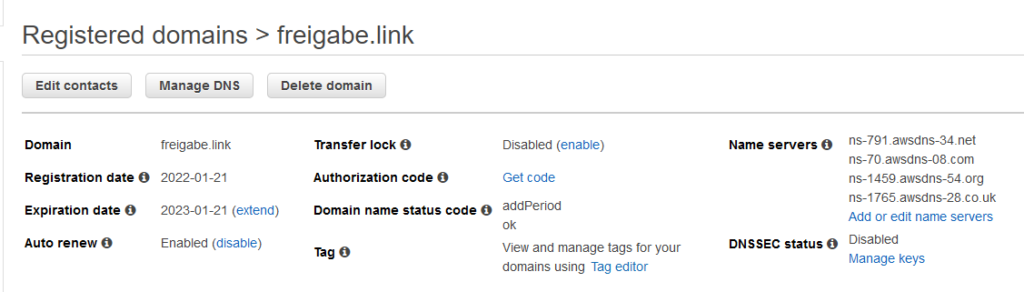

On the Route 53 page, select the domain freigabe.link under Domains > Registered domains:

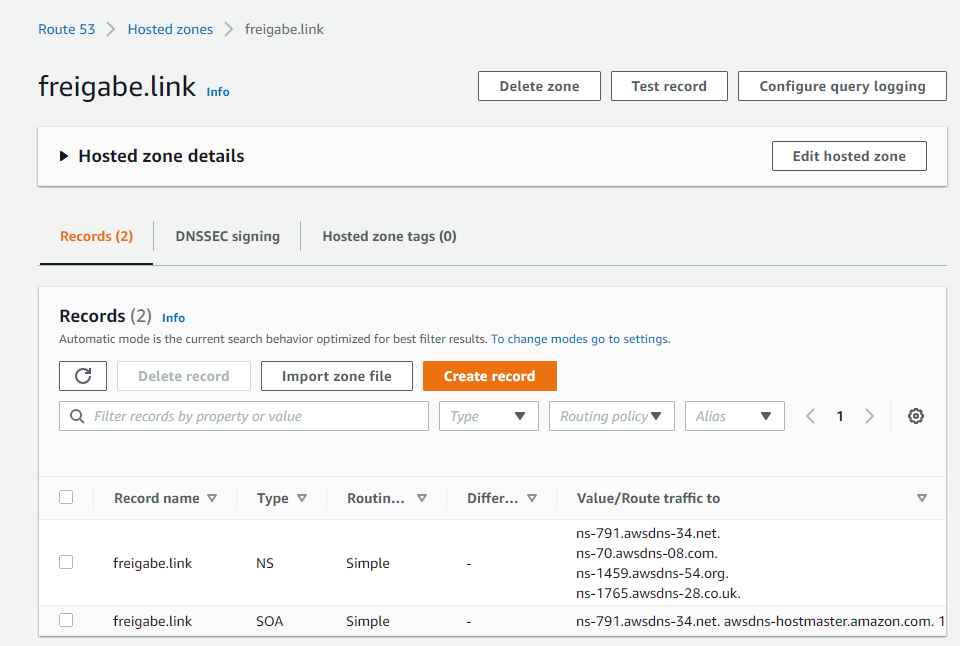

Manage DNS takes you to the domain's hosted zone:

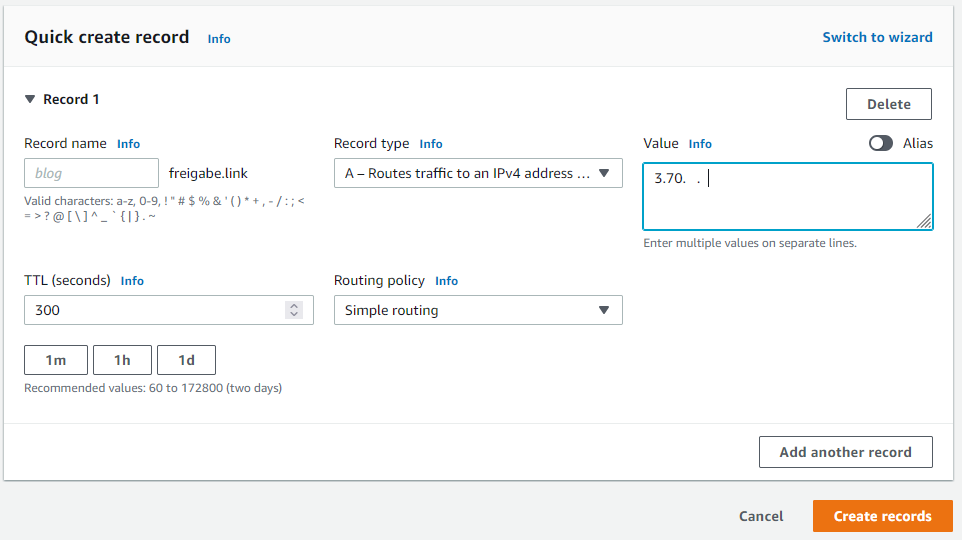

Create record adds the entry that makes the domain name point to the public IP of the EC2 server.
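The same A record could also be created without the console. The following Python sketch only builds the JSON change batch that the AWS CLI command `aws route53 change-resource-record-sets` accepts via its `--change-batch` option; it does not talk to AWS itself. The function name `a_record_change_batch`, the TTL of 300 seconds and the IP address 203.0.113.10 (a documentation placeholder, not the instance's real public IP) are assumptions for illustration.

```python
import ipaddress
import json


def a_record_change_batch(domain: str, public_ip: str, ttl: int = 300) -> dict:
    """Build a Route 53 change batch that points `domain` at an IPv4 address."""
    # Raises ValueError (AddressValueError) if public_ip is not a valid IPv4 address
    ipaddress.IPv4Address(public_ip)
    return {
        "Comment": f"Point {domain} to the EC2 instance",
        "Changes": [
            {
                # UPSERT creates the record or replaces an existing one
                "Action": "UPSERT",
                "ResourceRecordSet": {
                    "Name": domain,
                    "Type": "A",  # A record: name -> IPv4 address
                    "TTL": ttl,   # seconds resolvers may cache the answer
                    "ResourceRecords": [{"Value": public_ip}],
                },
            }
        ],
    }


if __name__ == "__main__":
    # Hypothetical placeholder IP (TEST-NET-3), replace with the instance's public IP
    batch = a_record_change_batch("freigabe.link", "203.0.113.10")
    print(json.dumps(batch, indent=2))
```

Saved to a file, the output could then be applied with something like `aws route53 change-resource-record-sets --hosted-zone-id <ZONE_ID> --change-batch file://change-batch.json`, where `<ZONE_ID>` is the ID of the hosted zone shown in the console. Using UPSERT means the same command also works for updating the record, which matters because an EC2 instance without an Elastic IP gets a new public IP after a stop and start.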

The web server turned out to have been shut down; I no longer remember for certain whether I had done that myself the day before. Once I had started it, everything worked right away.
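Whether the domain already resolves to the instance can also be checked with a few lines of standard-library Python. This is a minimal sketch; the function name `resolves_to` is hypothetical, and a local resolver may keep returning a cached answer until the record's TTL has expired.

```python
import socket


def resolves_to(hostname: str, expected_ip: str) -> bool:
    """Return True if any IPv4 address that hostname resolves to equals expected_ip."""
    try:
        # AF_INET restricts the lookup to IPv4, i.e. A records
        infos = socket.getaddrinfo(hostname, None, family=socket.AF_INET)
    except socket.gaierror:
        # Name does not resolve (yet), e.g. before the record has propagated
        return False
    # Each entry is (family, type, proto, canonname, sockaddr); sockaddr is (ip, port)
    return any(info[4][0] == expected_ip for info in infos)


if __name__ == "__main__":
    # Hypothetical placeholder IP, replace with the instance's public IP
    print(resolves_to("freigabe.link", "203.0.113.10"))
```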

Among other things, Tomcat's Catalina log contained the following entry:

Invalid character found in the request target [/index.php?s=/Index/\think\app/invokefunction&function=call_user_func_array&vars[0]=md5&vars[1][]=HelloThinkPHP21 ]. The valid characters are defined in RFC 7230 and RFC 3986
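The message refers to RFC 3986, which allows only a limited set of characters in the path and query of a URI. The following sketch checks a request target against those character sets. It illustrates the RFC rules and is not Tomcat's actual parser; as a simplification, a percent sign is accepted without validating the two hex digits that must follow it, and the function name `invalid_chars` is hypothetical.

```python
import string

# RFC 3986, section 2.3: unreserved characters
UNRESERVED = set(string.ascii_letters + string.digits + "-._~")
# RFC 3986, section 2.2: sub-delims
SUB_DELIMS = set("!$&'()*+,;=")
# pchar = unreserved / pct-encoded / sub-delims / ":" / "@"
# Simplification: "%" is accepted without checking the hex digits after it
PCHAR = UNRESERVED | SUB_DELIMS | {":", "@", "%"}
PATH_CHARS = PCHAR | {"/"}        # path segments are separated by "/"
QUERY_CHARS = PCHAR | {"/", "?"}  # query = *( pchar / "/" / "?" )


def invalid_chars(request_target: str) -> set:
    """Return the characters in an origin-form request target that RFC 3986 does not allow."""
    # The first "?" separates the path from the query
    path, _, query = request_target.partition("?")
    bad = {c for c in path if c not in PATH_CHARS}
    bad |= {c for c in query if c not in QUERY_CHARS}
    return bad


if __name__ == "__main__":
    logged = r"/index.php?s=/Index/\think\app/invokefunction&function=call_user_func_array&vars[0]=md5&vars[1][]=HelloThinkPHP21"
    print(sorted(invalid_chars(logged)))
```

For the logged request, the check flags the backslashes and the square brackets. The request itself appears to be a probe for a ThinkPHP vulnerability, i.e. an automated scan that has nothing to do with the Tomcat application.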

Perhaps there were too many of these hacking attempts?

Next, a reverse proxy goes in front of it, which can keep some of the traffic away from Tomcat.

Maybe I'll also put a WAF in front of the server? A quick search showed, however, that this is not possible directly; an Application Load Balancer or CloudFront has to be placed in between.

A side issue is email, so that I can receive and send mail at this domain.

Unfortunately, this is not as easy to solve as I had hoped. There is no simple "AWS mail service" that can be configured via Route 53. Amazon WorkMail is a SaaS solution, but it focuses on businesses and is priced accordingly.

Google Apps is also recommended in various places, but it costs money too and is not covered by the free offerings.

An SES / S3 solution only covers the need rudimentarily; for example, the mails are stored as files in an S3 bucket. Other setups seem to be possible as well, but none of them is convincing.
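To illustrate why this is rudimentary: with an S3 action in an SES receipt rule, each incoming message ends up as a raw MIME file in the bucket, and reading it means parsing it yourself. A minimal sketch using Python's standard `email` package, assuming the object has already been downloaded as bytes (the download is not shown, and the function name `summarize_raw_mail` and the sample message are made up):

```python
from email import message_from_bytes, policy


def summarize_raw_mail(raw: bytes) -> dict:
    """Extract sender, subject and plain-text body from a raw MIME message."""
    # policy.default enables the modern EmailMessage API (get_body, get_content)
    msg = message_from_bytes(raw, policy=policy.default)
    body = msg.get_body(preferencelist=("plain",))
    return {
        "from": str(msg.get("From", "")),
        "subject": str(msg.get("Subject", "")),
        # get_body returns None if there is no suitable text/plain part
        "text": body.get_content() if body is not None else None,
    }


if __name__ == "__main__":
    # Hypothetical sample message, as it might be stored by SES in S3
    sample = (
        b"From: alice@example.com\r\n"
        b"To: info@freigabe.link\r\n"
        b"Subject: Hallo\r\n"
        b"Content-Type: text/plain; charset=utf-8\r\n"
        b"\r\n"
        b"Test\r\n"
    )
    print(summarize_raw_mail(sample))
```

Anything beyond this, such as a mailbox view, replies or spam filtering, would have to be built separately, which is what makes this route unattractive.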

Zoho, for example, is recommended as a free webmail solution. A guide can be found here, for example.

Of course, it would also be possible to run my own webmail server on a separate EC2 server.

As a middle ground, an additional Docker container on the existing EC2 server would also work.

Complete mail server solutions include Mailcow, Mailu and Kopano.

Each of these can be implemented with more or less effort, but every one of them takes more effort than my current motivation or actual need justifies, so for now I'm putting it on the "when I have time" list.